The Echo: an AI Experimental Film

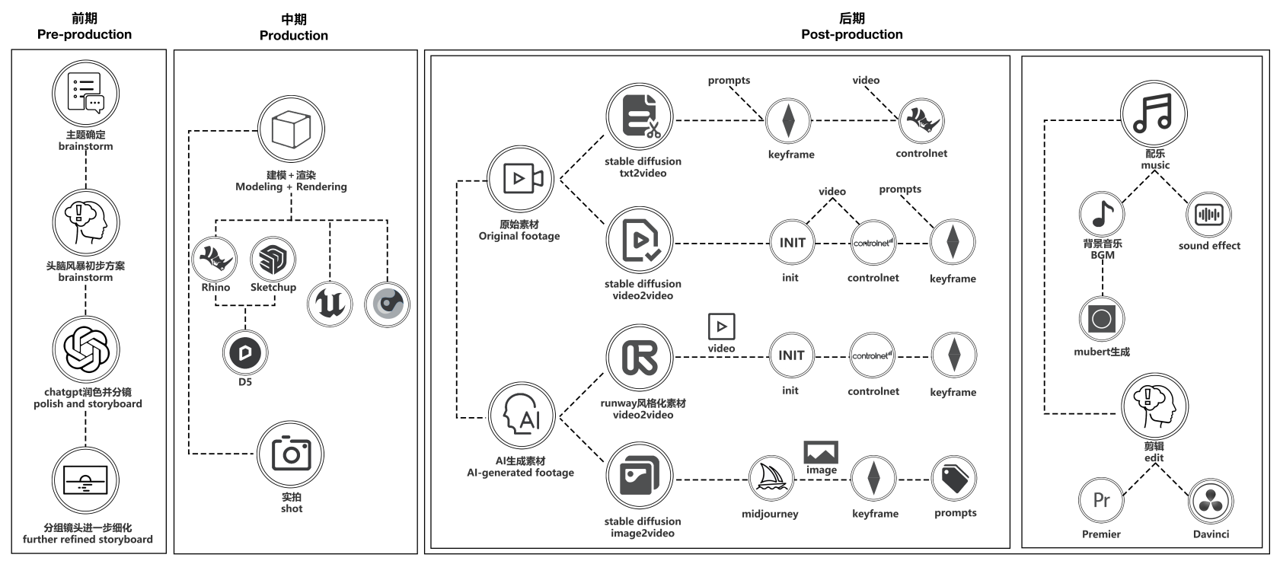

Using current generative AI tools (Stable Diffusion, Runway, ChatGPT, Midjourney, Mubert) alongside live-action footage, 3D modeling, Unreal Engine, video post-production, and music and sound effects, we aim to refine an AI video production workflow. Our ultimate goal is to complete an AI art film that incorporates these technologies and software.

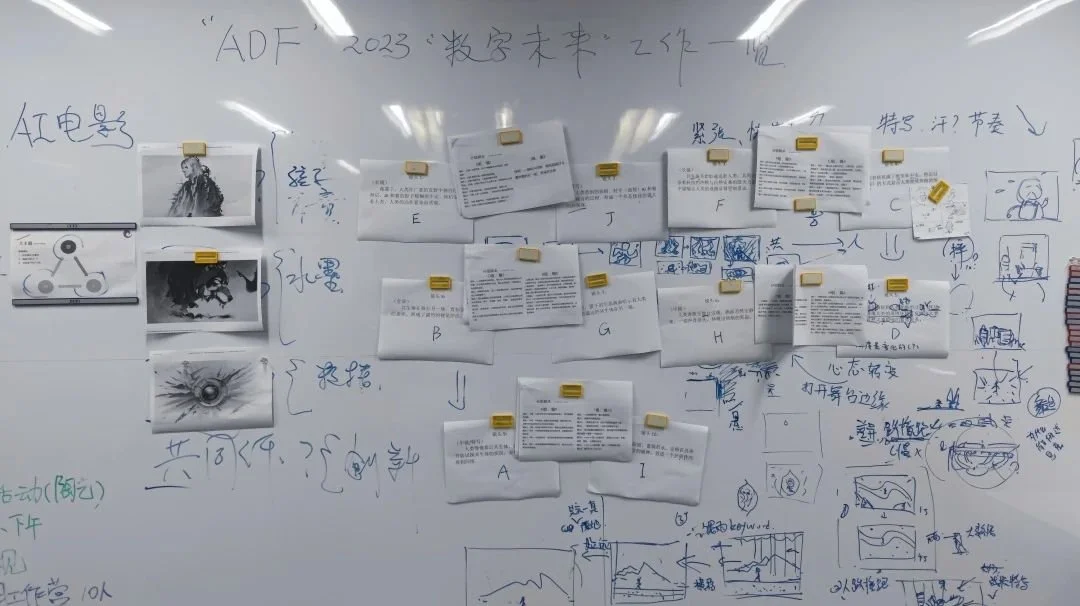

Group Project: Architecture Digital Futures 2023 Summer Workshop (Artificial Intelligence Multidimensional Spatial Narrative Image Generation Methods and Practices)

My Role: Leader of Group G, Storyboard Script, Scene Design, AI Generation

Year: 2023

Location: Shanghai, China

Introduction

"The Echo" is an experimental short film that utilizes artificial intelligence art generation techniques. The film explores the symbiotic and conflicting relationships between humans, technology, and nature, telling a metaphorical story with poetic language to reflect on the relationship between humans and the environment and our existence on Earth.

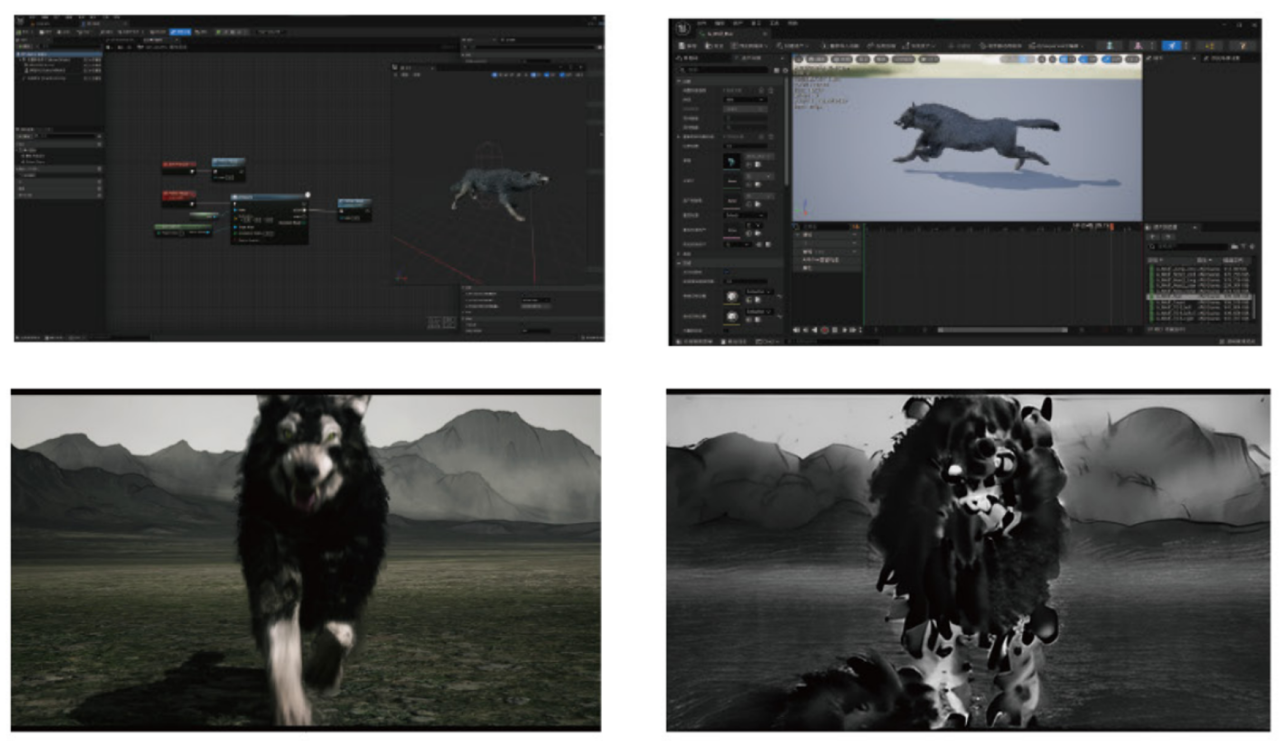

In a dim jungle, a person runs swiftly, pursued by wolves and a spherical AI, their fear magnified with each glance back. As the chase intensifies, the wolf and sphere merge into a formidable symbiote, embodying a mix of natural and technological forces. Amidst the chaos, the person confronts the symbiote, and as fear dissipates, a moment of mutual recognition unfolds, suggesting a deeper connection between human, nature, and technology.

The film's creative process embraces artificial intelligence as a distinct creative entity. With ChatGPT for script development, live-action footage combined with AI tools such as Stable Diffusion and Runway for visual creation, and Mubert for AI-generated music, the film navigates the dynamic interplay between human creativity and AI’s capabilities.

"The Echo" contemplates the fragile equilibrium among humans, AI, and the environment. It envisions breaking down barriers, fostering a new coexistence that blends technology, nature, and society into a unified whole, steering towards a future of mutual symbiosis.

Post-Production

Workflow

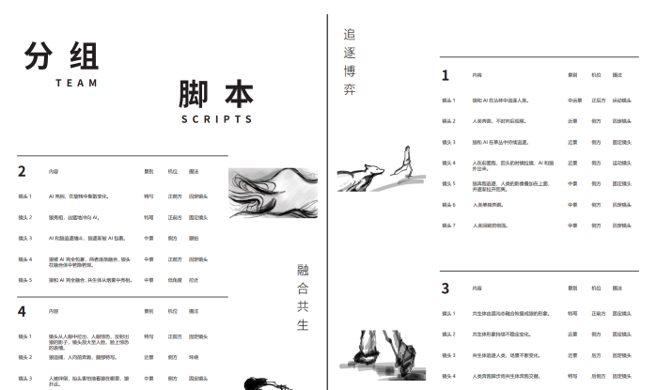

Script

Material Production

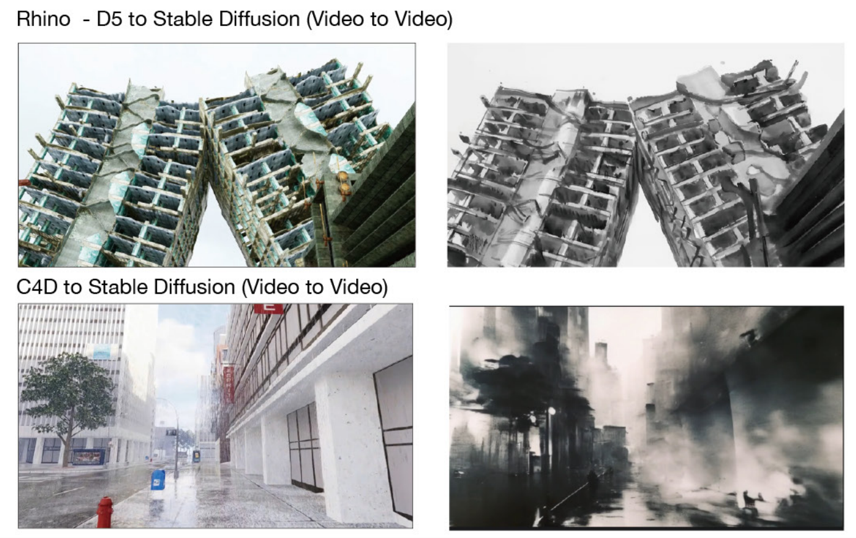

Modeling and Rendering

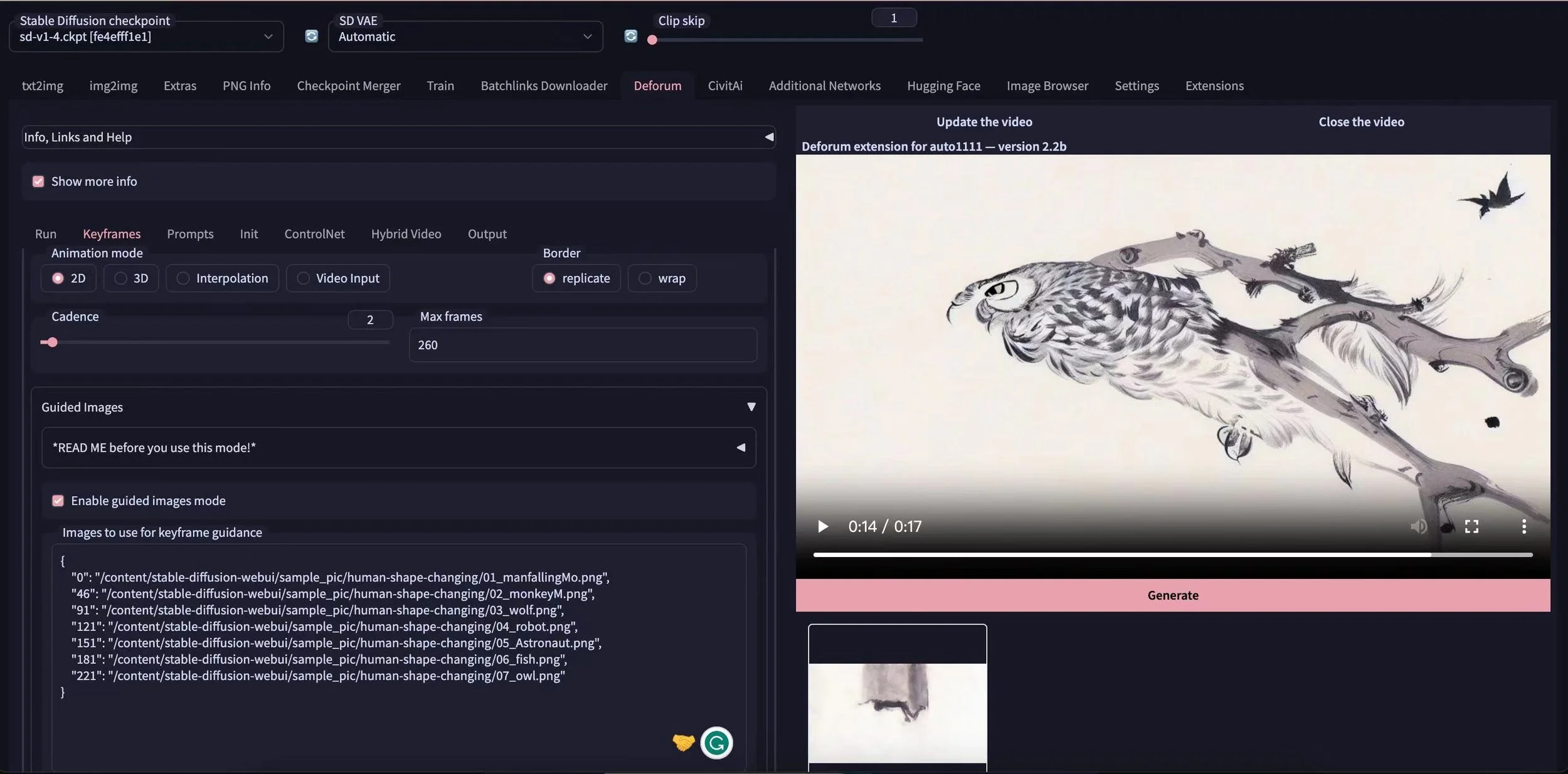

Video to Video

Group

Text to Video

Live-action footage is shot first, followed by AI generation and stylization with Video to Video.

Video to Video: Virtual production materials are built with DCC software and Unreal Engine (scene creation, animation, and rendering), followed by AI generation and stylization with Video to Video.

Text to Video: Videos are generated directly from text using AI.

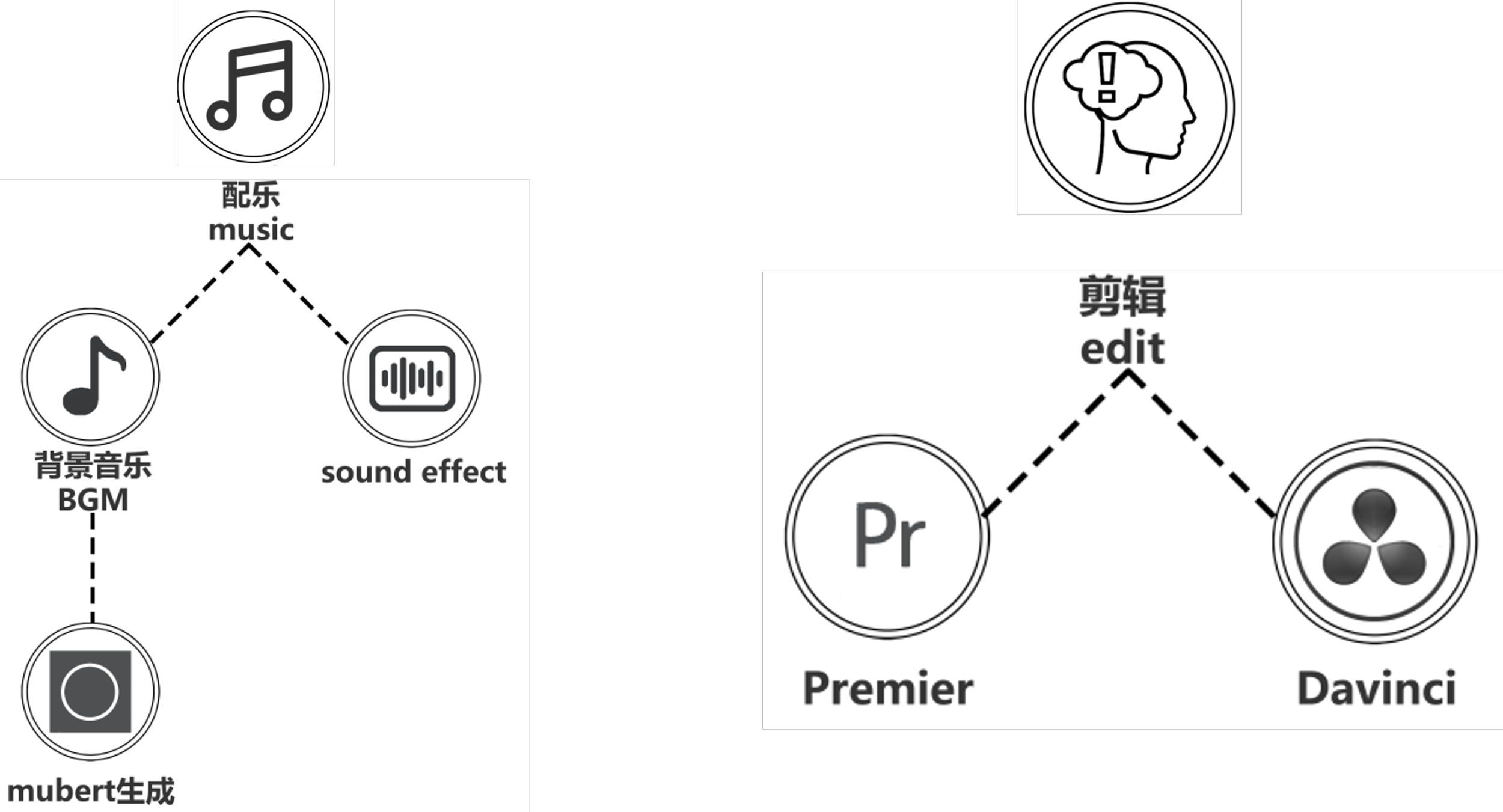

In post-production, the background music is AI-generated with Mubert. Sound effects are produced through recording, sampling, and other methods.

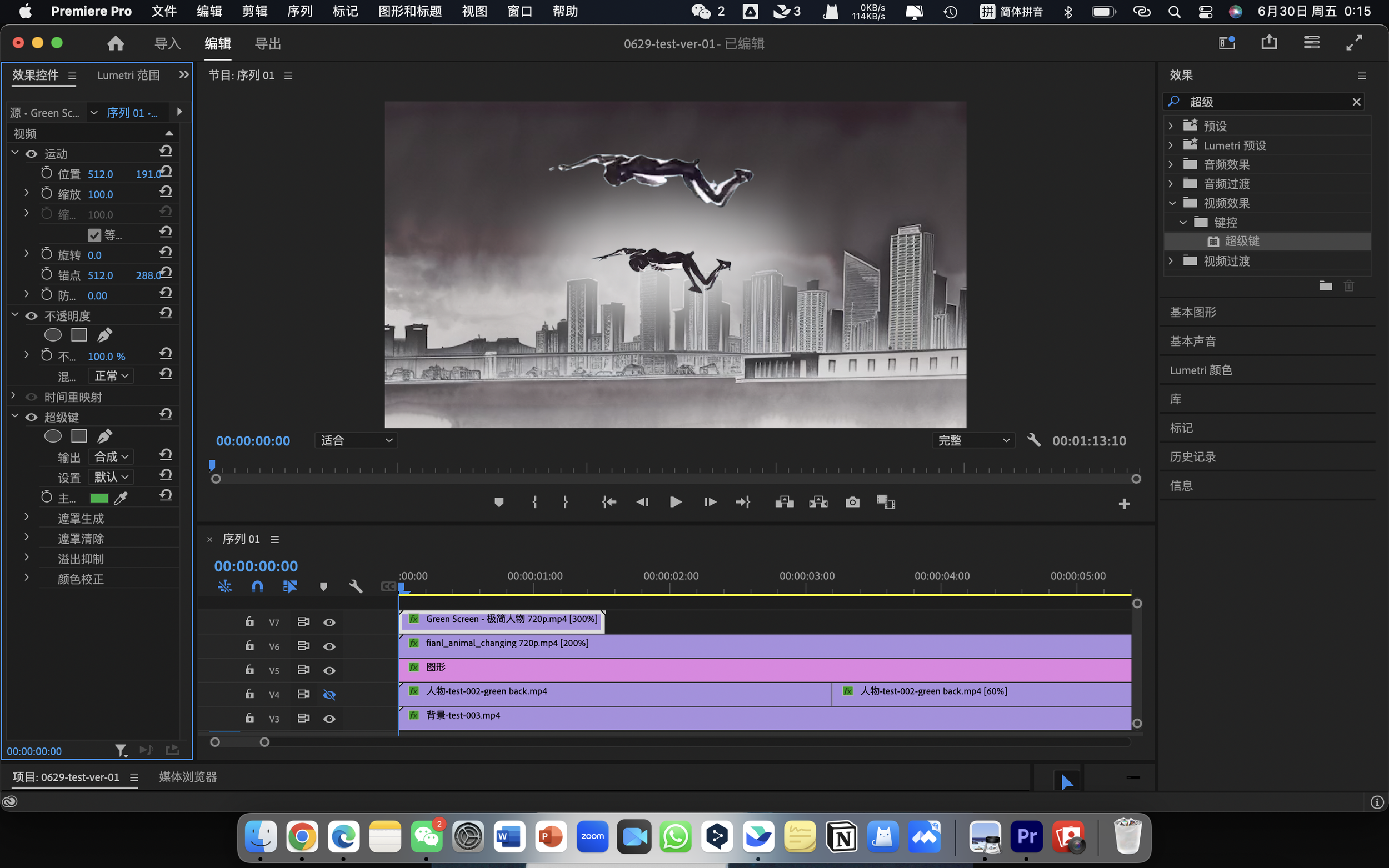

Finally, video editing and post-production compositing are carried out, and the resolution of the final piece is enhanced using Topaz software.

Exhibition

Selected for the "2023 Shanghai Urban Space Art Season (2023 SUSAS)."

Workshop

Mentors: Xingze Yu | Xiao Luo

Teaching Team: College of Architecture and Urban Planning, Tongji University | International School, Central Academy of Fine Arts